One of the big meta themes from this year’s IBC was the rise of Artificial Intelligence and how AI TV and Machine Learning will impact every level of the industry.

On 4th October, Google took over the SF Jazz Club in San Francisco for a widely trailed hardware launch event that introduced the Pixel 2 phone to the world. Interesting though the new phone is, what really seemed to snag the attention of many commentators was a new, unheralded product announced towards the end of the presentation.

Google Clips (pictured) is a $249, 12-megapixel camera that relies on AI routines to take pictures. The camera asks itself if anything interesting is happening in front of it and, if it is (usually the appearance of a face it recognises), it takes a picture. Indeed, initial prototypes of the camera didn’t have a shutter button at all; it was only market testing outside the organisation that persuaded the company that many users found it psychologically necessary to at least have access to one.

As you can tell from that anecdote, Google has embraced AI in a big way. The first 10 minutes of its San Francisco presentation were all about how Moore’s Law was being left behind and how the future lay at the intersection of ‘AI + software + hardware’. In doing this, it was only echoing one of the main themes to come out of this year’s IBC.

AI TV: The Story so Far

As with VR, AR and Mixed Reality, it’s worth backing up a bit and checking the definitions currently in circulation, as the terms are often used interchangeably (with AI usually serving as the catch-all).

Definition of Artificial Intelligence: Any software or device that reacts to its environment and alters its actions to maximize its chances of success at a given task. It thus appears intelligent.

Definition of Machine Learning: Algorithms that can learn from data inputs and make accurate predictions/decisions as a result.

Definition of Deep Learning: The construction of evolving neural networks to analyse large amounts of data and produce conclusions.

As Bernard Marr wrote in Forbes, these can be thought of as an evolutionary process themselves: ML represents the current state of the art in AI, while DL represents the cutting edge of the cutting edge and is the technology driving Amazon and Netflix’s data analytics systems.

This is inevitably where a lot of the interest from the broadcast industry is concentrated. Speaking at IBC, Adrian Drury, technology strategy and insight director at Liberty Global, said that AI had the potential to disrupt all aspects of a broadcaster’s business.

“It will be important in pulling insights out of video content, for automatic metadata generation, smart search and even for monetization – finding out what content and ads consumers are most interested in,” he said.

So much, so expected. Much of the development from AI to DL has been driven by the requirements of Big Data. But AI will penetrate the industry at other levels too.

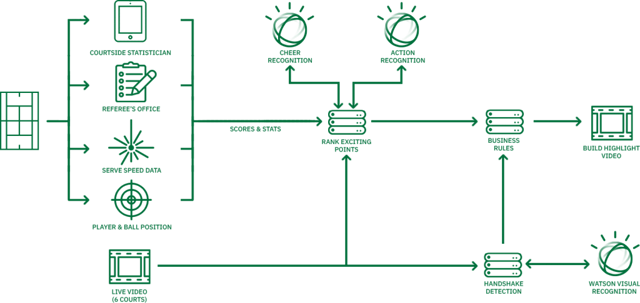

At this year’s Wimbledon tennis tournament, for instance, IBM provided what it termed ‘cognitive highlights’ of The Championships. The system built upon one developed for analysing golf video content at the 2017 Masters. But whereas that one selected and curated individual segments for an editor to choose from, the Wimbledon system automatically created one- to two-minute highlight packages from six courts for use across the tournament’s digital platforms.

The diagram below illustrates the workflow, and you can read more about the way the system works in this IBM blog post, but here’s a quick extract.

“The IBM Research team trained the system to recognize crowd cheering and the player’s reaction using videos of matches of previous tournaments, with associated contextual metadata from the on-court statistician to filter out specific content. The technology relies on state-of-the-art deep learning models which provides effective methods for learning new classifiers using a few manually annotated training examples, using active learning techniques.”
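To make the idea concrete, here is a minimal Python sketch of how per-segment classifier scores might be turned into a short highlight package. It is not IBM's method: the `Segment` fields, the `excitement` weighting and the greedy selection in `build_highlights` are all assumptions made for illustration, and the cheer and reaction scores are presumed to come from upstream classifiers that are not shown.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Segment:
    """A candidate clip with hypothetical classifier scores in [0, 1]."""
    start: float            # seconds from the start of the match
    end: float              # seconds from the start of the match
    crowd_cheer: float      # assumed output of a crowd-noise classifier
    player_reaction: float  # assumed output of a player-reaction classifier

    @property
    def duration(self) -> float:
        return self.end - self.start


def excitement(seg: Segment, cheer_weight: float = 0.6) -> float:
    """Weighted blend of the two scores; the 0.6 weight is an arbitrary assumption."""
    return cheer_weight * seg.crowd_cheer + (1.0 - cheer_weight) * seg.player_reaction


def build_highlights(segments: list[Segment], max_seconds: float = 120.0) -> list[Segment]:
    """Greedily keep the most exciting segments that fit within max_seconds."""
    chosen: list[Segment] = []
    used = 0.0
    for seg in sorted(segments, key=excitement, reverse=True):
        if used + seg.duration <= max_seconds:
            chosen.append(seg)
            used += seg.duration
    # Return the package in match order so it plays chronologically.
    return sorted(chosen, key=lambda s: s.start)
```

Calling `build_highlights` with a list of scored segments returns the chosen clips in chronological order. A production system would also have to handle things this sketch ignores, such as trimming clip boundaries and filtering by the statistician's metadata mentioned in the extract.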

Taking AI Further into TV

AI, of course, has already made forays into the editing world: an IBM team was charged with putting together a trailer for the Fox horror movie Morgan in 2016.

The result is probably best described as serviceable, and this seems to be the level AI production techniques are currently being pitched at. David Shield, Senior Vice President and Global Director of Engineering and Technology for IMG’s sports production division, talks about teaching computers how to emulate certain simple tracking moves in soccer matches.

“We will probably always have 25 cameramen around a Premier League football match but at League 2 level, for example, we could have automated remote coverage with fixed cameras that would be difficult to distinguish from a fully produced game,” he told IBC365. “Rather than put anyone out of a job, AI should democratise the amount of coverage we get and help to bring down the cost of high-quality production.”

Activity is ramping up. A growing number of AI projects in the broadcast industry, from both established players and start-ups, are looking at integrating AI and ML into established workflows. Monitoring, logging, QC, versioning, transcribing, translating, subtitling… all of the aspects of television production that tend to follow specific processes and set workflows are ripe for the new AI-driven automation.

Some of the more creative aspects of the industry might take longer to be integrated into the new intersection of AI + software + hardware, and some may resist entirely until we reach a level of artificial intelligence that genuinely mirrors our own. But the process is underway and feels as inexorable as the industry’s move towards IP production.

And, as we explore the interface of AI & TV, that means we can probably expect the odd surprise along the way too.