In the current broadcast industry, progress is not only rapid, it’s accelerating. Nowhere is this more evident than in the field of data analytics, the impact of which is growing every year.

Indeed, it’s hard to overstate the importance of big data analytics when operators worldwide are exploiting big data to understand their audiences better, unleash the power of addressable advertising, and reduce churn.

So, what are the key trends that can be isolated from analytics deployments, both the existing ones and the ones in development? Is big data really the path to great success? The short answer is yes, but there are some important considerations that need to be taken into account along the way.

Baked-in data privacy is crucial

A synthesis of the discussions and debates we've had in recent times with customers and experts in the field starts with the fact that the importance of privacy cannot be overstated. It is widely considered to be a main contributing factor in the delayed roll-out of analytics solutions in the industry. And with legislators watching keenly, concerns have arisen about precisely what data can be used, and how.

Lawmakers are just starting to catch up with recent developments on the internet. The European Union’s rollout of its General Data Protection Regulation (GDPR) in May this year will be extremely significant, not only for operators based in Europe or wanting to reach European audiences, but also because it may well act as a template for legislation in other parts of the world. Read our blog post GDPR: The Implications for TV Service Providers to find out more.

The GDPR aside, research suggests that consumers have a very low engagement rate with terms and conditions or privacy policies. Only 0.05% of agreements are accessed by consumers before consent is given. As a consequence, opt-ins need to be explicit.

There is also a range of best-practice and/or mandated procedures that need to be followed, such as anonymizing data and destroying it after analysis.

Consumers as yet have little knowledge of how much data is being collected about them and how it is being used (though the expectation is that the publicity surrounding the implementation of the GDPR will change that). As products and campaigns begin to leverage personal information, the industry needs to be mindful of developing concerns. While consumers are used to providing data on the internet, this is usually for free services such as social media. Attitudes towards a Pay-TV provider who already charges a monthly subscription may be much harder.

Needless to say, security in data processing is vital. This is especially important given the prevalence of using cloud computing resources to process large data sets. Data breaches were considered unacceptable and capable of generating impressive amounts of bad publicity before the GDPR. After it, they can also lead to hefty fines, with even what the regulation terms ‘minor’ offences attracting penalties of up to €10 million or 2% of global turnover, whichever is the greater. Third-party datasets are also often combined, so exemplary security processes need to be adopted to assure suppliers of the integrity of their data.
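To make the ‘whichever is the greater’ rule concrete, here is a minimal Python sketch of the arithmetic using the two figures quoted above. The function name and the sample turnover values are illustrative assumptions, and the result is only the ceiling implied by those figures, not a prediction of what a regulator would actually impose.

```python
from decimal import Decimal

# Figures as quoted in the text above for 'minor' offences.
FIXED_CAP_EUR = Decimal("10000000")  # EUR 10 million
TURNOVER_SHARE = Decimal("0.02")     # 2% of global turnover


def lower_tier_fine_ceiling(global_turnover_eur: Decimal) -> Decimal:
    """Hypothetical helper: return the greater of the fixed amount and
    the turnover-based amount."""
    return max(FIXED_CAP_EUR, global_turnover_eur * TURNOVER_SHARE)


if __name__ == "__main__":
    # Made-up turnover figures, chosen to land on either side of the
    # point where 2% of turnover overtakes the fixed amount.
    for turnover in (Decimal("200000000"), Decimal("2000000000")):
        print(turnover, lower_tier_fine_ceiling(turnover))
```

On these numbers the fixed amount dominates until global turnover passes €500 million, after which the 2% figure takes over.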

The importance of big data analytics: it’s not magic

There is debate over exactly what big data can and cannot do. Netflix’s use of it in the commissioning process is now considered by many in the industry to have been overstated by early proponents of data analytics. It's a factor, probably a very important one (Netflix plays its cards very close to its chest when it comes to talking about such things), but it is not the sole basis for greenlighting productions. So, what is big data used for? A number of things. Internally it can be used to better understand what customers want. It doesn't guide the commissioning process, but it can inform it and lead operators to make better content decisions. This can, of course, also apply to maintaining catalogue and licensing content.

Operators are using it to identify customers at risk of churn, to reach out with individually tailored messages and more. This has always been a part of the business, but what is changing now is that the reaction to behaviors is becoming close to real time. Old methods of analyzing behavior could have a months-long lag, after which, for customers looking to leave a service, the matter may already have been decided.

These customers can now be intercepted before the desire for cancellation becomes actionable.

Addressable advertising

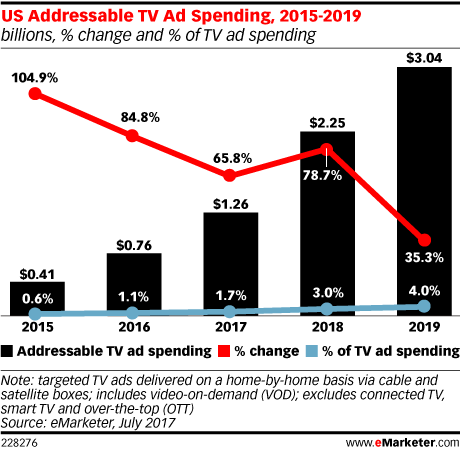

In terms of outbound activity, addressable advertising is considered to be one of the most powerful uses of data analytics. As yet it only represents a small amount of total ad spend (eMarketer figures suggest it accounted for 1.7% of total TV ad spend in the US last year, for instance) but it has enormous potential. And it is growing, in part because it allows geographic segmenting. While all sorts of interesting socio-demographic datasets are available, pinpointing the location of viewers and mapping that to geographic restrictions of businesses is what is unlocking revenue streams for operators.

Sky’s pioneering AdSmart service, for example, now has a potential audience of 30 million in the UK following a tie-up with the broadcaster’s pay-TV rival Virgin Media last summer. And that customer base can be targeted at household level in a huge number of ways, from financial attributes to whether the property has a south-facing garden. The advertiser pays on a cost per impression basis when the advert is seen (three quarters of the ad has to be seen to count as an impression).
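The cost per impression rule described above can be sketched in a few lines of Python. This is purely illustrative and not Sky's implementation: the `AdView` record, its field names, the sample viewing times and the price per impression are all assumptions, as is the reading of the threshold as ‘at least three quarters’.

```python
from dataclasses import dataclass
from typing import Iterable

# Share of the ad that must be seen to count, per the text above.
VIEW_THRESHOLD = 0.75


@dataclass
class AdView:
    """Hypothetical record of one ad playout in one household."""
    household_id: str
    ad_length_s: float
    seconds_seen: float


def is_impression(view: AdView) -> bool:
    """True when at least three quarters of the ad was seen."""
    if view.ad_length_s <= 0:
        return False
    return view.seconds_seen / view.ad_length_s >= VIEW_THRESHOLD


def billable_cost_pence(views: Iterable[AdView], pence_per_impression: int) -> int:
    """Charge only for playouts that qualify as impressions."""
    impressions = sum(1 for view in views if is_impression(view))
    return impressions * pence_per_impression


if __name__ == "__main__":
    sample = [
        AdView("hh-1", 30.0, 30.0),  # fully seen: counts
        AdView("hh-2", 30.0, 22.5),  # exactly three quarters: counts
        AdView("hh-3", 30.0, 10.0),  # skipped early: does not count
    ]
    print(billable_cost_pence(sample, pence_per_impression=5))
```

Keeping the price in whole pence avoids floating-point rounding when the charges are summed across a campaign.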

It is a clever solution. AdSmart will play out current ads when old content is being viewed at normal speed on a Sky STB. And after a certain viewing threshold is reached, it will also start serving the same ads on devices associated with the household account.

All this effectively allows local businesses to advertise on national channels. And with prices starting at £3000 per campaign, and all that money related to distinct impressions, it is opening up new revenue streams. Indeed, AdSmart is currently helping Sky consistently outperform the wider UK advertising market.

According to eMarketer again, US addressable TV ad spending is expected to reach $3.04 billion in 2019, more than double its 2017 level of $1.26 billion, taking it to as much as 4% of total TV ad spending.

The importance of multiple datasets in big data analytics

Much of the data that AdSmart uses is third-party data, and it is important for operators looking to maximize their big data value to realize how much can be gained from bringing existing datasets together. The service started with 400 targeting attributes when it launched in 2014. It now features over 1400. Operators can really start to obtain value when multiple parameters can be cross-referenced and contextualized. This is enhanced when their own data is included.

However, a lack of standardization is a problem and means that datasets do not necessarily merge smoothly. As a result, fragmentation and limited footprints can be an issue, particularly over large territories such as the US. Transparency must also be built into the process, so that all parties, the end-user included in GDPR territories, are aware of exactly what the data is going to be used for.

The importance of big data analytics will only grow

We are still in the early days of data analytics use in the broadcast industry, but the benefits are already clear. Those exploiting it now have better visibility into the performance of their content and a better understanding of customer behavior (including at-risk categories).

Crucially, as well as the promise of addressable advertising, it also allows for increasingly reliable predictions to be made. While Netflix might not be commissioning content solely based on big data, it is certainly using it to power its sophisticated recommendation engines. This ensures that customers are served with the content they want to see first. With an increasing mixture of OTT providers, Pay-TV operators and linear broadcasters competing for viewer attention, making that first 10 seconds or so of contact compelling is more important than ever.

The GDPR (and any future legislation based on it elsewhere) will have an impact on the ease with which the industry has used big data analytics to date, that is certain. But it is not necessarily a cause for pessimism: regulation can be a good thing. Interviewed in one of the most influential posts from the formative eras of data analytics, the Harvard Business Review’s With Big Data Comes Big Responsibility, Alex ‘Sandy’ Pentland, the Toshiba Professor of Media Arts and Sciences at MIT, compared it then to the early days of the financial industry:

“Look at the financial industry, for instance,” he said. “Starting in the 1800s, it was basically unregulated, and we had booms and busts that destroyed huge swaths of the economy and ruined many families and communities. That’s where we are with personal data. People say personal data is the new oil of the internet. What they mean is that it’s a new asset class, a new value, a new currency. And we don’t have the regulations to treat it like the value class it is. We need data banks. We need data auditing. We need them in order to avert huge disasters from breaches and attacks and class action suits.”

Which is where we are now.

Assessing the current state of the importance of big data analytics within the media industry, you are left with the impression of a field that is moving from a state of blindness to one of awareness. In the next phase, as its deployment becomes standardized and the legal framework surrounding it is strengthened, increased volumes of potential data can be captured. As the powerful new analytics capabilities driven by the adoption of machine learning come to the fore, we could well be moving towards an era of increased importance for data analytics, best characterized as enlightenment.