The Common Media Application Format (CMAF) looks set to revolutionize the delivery of adaptive bitrate streams and lead to much lower OTT latency, enabling a better user experience and reduced CDN costs.

Whether sports or esports, gigs or cultural events, streaming live has become a steadily more important feature of the OTT landscape, as providers look to differentiate their offerings for the consumer.

With that has come the problem of latency. Sport in particular does not take place in a vacuum; any delay in the video signal can be cruelly highlighted by the speed with which information in the shape of goals scored or penalties missed can be transmitted via other routes, such as social media networks.

We’ve written about the problem of latency before, in particular the way that acquiring the necessary DRM licenses to play secured content can exacerbate it. At one point during the World Cup last year we were granting 1000 licenses every second via our VO Multi-DRM solution for a single broadband client, so you can see the scale of the problem. But efficient DRM is not the whole of the solution. What is starting to get the industry excited, and is at the root of an increasing number of conversations with customers on our stand at tradeshows, is CMAF: the Common Media Application Format.

The Problem of OTT Latency

An informative whitepaper from Akamai details some of the figures involved and provides an indication why OTT latency has become such a problem.

First off, it’s worth looking at the sheer volume of traffic. Enough of the Internet’s video data runs across Akamai’s servers that its figures are usually considered a solid benchmark, and it says that OTT live streaming has grown 22,000-fold since 2001. That first stream, of all things a Victoria’s Secret webcast, came in at 1Gbps. By the time of the Russian World Cup in 2018, peak demand had grown to 23Tbps.

Of course, one of the major technologies that separates the two and has allowed that scale to be achieved has been the introduction of HTTP Adaptive Streaming (HAS), which uses standard HTTP servers and TCP to deliver video. As Akamai states, this meant that content delivery networks (CDNs) could leverage the full capacity of their HTTP networks to deliver streaming content instead of relying upon smaller networks of dedicated streaming servers.

Initial caution about the amount of traffic these early networks could handle led to a conservative approach designed to avoid buffering. So, when Apple introduced its HTTP Live Streaming (HLS) format, it recommended that content be divided into 10-second segments and be streamed with a three-segment starting buffer in the player. This effectively became the de facto standard for the industry. Even though Apple has subsequently lowered the segment length to six seconds, it has maintained the three-segment buffer requirement.
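The arithmetic behind that buffer is worth spelling out. The following is a minimal sketch, assuming the player holds back exactly the recommended number of full segments before it starts playing; the function name is illustrative and not part of any player API.

```python
def startup_buffer_seconds(segment_seconds: float, buffered_segments: int = 3) -> float:
    """Media the player holds back before playback starts, in seconds."""
    return segment_seconds * buffered_segments


# Original HLS recommendation: 10-second segments, three-segment buffer.
print(startup_buffer_seconds(10))  # 30
# Later recommendation: six-second segments, same three-segment buffer.
print(startup_buffer_seconds(6))   # 18
```

Even at six seconds per segment, the buffer alone accounts for 18 seconds before any encoding, packaging, or network delay is counted.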

Add video encoding, packaging, network delay, and decoding into the mix, and you get the following comparison between different forms of content delivery.

- Satellite latency: 3.5 - 12 seconds

- IPTV latency: 4 - 6 seconds

- Terrestrial Cable: 6 - 12 seconds

- OTT: 25 - 40 seconds

This means that at a minimum, the OTT signal will lag 13 seconds behind the conventional broadcast signal, and often be much further behind. This amount of latency is not sustainable in the modern mixed-screen and mixed-delivery environment. Indeed, latency is identified as the biggest problem faced by video developers, cited by 55% of respondents globally in the benchmark 2018 Bitmovin Video Developer Report, and rising to 74% in the LATAM region.
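The 13-second figure follows directly from the ranges listed above: it is the best-case OTT latency minus the worst-case cable or satellite latency. A short sketch using only those figures (the dictionary and function names are illustrative):

```python
# Latency ranges in seconds as (best case, worst case), taken from the list above.
LATENCY_RANGES = {
    "satellite": (3.5, 12.0),
    "iptv": (4.0, 6.0),
    "cable": (6.0, 12.0),
    "ott": (25.0, 40.0),
}


def minimum_lag(delivery: str, reference: str) -> float:
    """Smallest gap: best case of `delivery` against worst case of `reference`."""
    return LATENCY_RANGES[delivery][0] - LATENCY_RANGES[reference][1]


def maximum_lag(delivery: str, reference: str) -> float:
    """Largest gap: worst case of `delivery` against best case of `reference`."""
    return LATENCY_RANGES[delivery][1] - LATENCY_RANGES[reference][0]


print(minimum_lag("ott", "cable"))      # 13.0
print(maximum_lag("ott", "satellite"))  # 36.5
```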

Introducing CMAF

There are, of course, a few other complications as well. The other main HAS format in operation is MPEG-DASH (Dynamic Adaptive Streaming over HTTP), which features shorter segment times but isn’t supported by Apple’s Safari browser. Because it is put together in a slightly different way from HLS, content has to be packaged in both formats if content owners want to reach the maximum number of devices. That also means both formats compete for space in CDNs, exacerbating the problem.

According to the previously mentioned Bitmovin report, HLS is used by 82% of developers, with 61% using MPEG-DASH. What can now be considered the legacy formats of RTMP, Smooth Streaming, and Progressive Streaming are still being used by 33%, 27%, and 23% respectively. But perhaps the most interesting statistic is that use of CMAF has nearly doubled over the previous year. It is still only 10%, but a further 23% plan to implement it over the coming year.

CMAF stands for Common Media Application Format (officially MPEG-A Part 19) and is potentially the answer to many of the current problems that bedevil the industry. It was introduced by Apple and Microsoft in 2016 and finalized a year later. Rather than being a rival to HLS or DASH, CMAF is essentially a container that can hold audiovisual data that can be distributed and read by both. HLS is still required in the Apple ecosystem and DASH is used elsewhere (e.g. Android), but using CMAF for delivery halves the number of files packaged and halves the number of files going over the CDN, also halving costs and doubling efficiency in one stroke.
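The halving claim can be checked with a rough count. This sketch assumes every rendition of every segment is stored once per packaging format and ignores manifests and playlists, which are small by comparison. The ladder size and event length are made-up example values, not figures from any deployment.

```python
def media_files(renditions: int, segments: int, packaged_formats: int) -> int:
    """Number of media segment files produced for one piece of content."""
    return renditions * segments * packaged_formats


# Hypothetical example: a 6-rung bitrate ladder and a two-hour event
# cut into 6-second segments.
renditions = 6
segments = 2 * 60 * 60 // 6  # 1200 segments

separate = media_files(renditions, segments, packaged_formats=2)  # HLS set + DASH set
shared = media_files(renditions, segments, packaged_formats=1)    # one CMAF set for both

print(separate, shared)  # 14400 7200
```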

Add DRM to the equation and, thanks to the introduction of CBCS encryption (technically AES-128 in Cipher Block Chaining mode), CMAF further removes complexity by allowing content owners to address devices with one single, encrypted media format. This is instead of the current situation of having to produce discrete Widevine, FairPlay, and PlayReady streams.

And to add further to the list of CMAF advantages, there are no technical barriers to its adoption; it uses existing technologies as its container format and retains compliance with non-CMAF systems.

In many ways it’s a no-brainer, and given that the standard was only officially published in January 2018, its adoption has been rapid. For the moment, though, let us focus on the way it deals with latency, as that is one of the most pressing problems to solve in live video streaming.

OTT Latency: Where CMAF Wins

The segmented methods of encoding, distributing, and then decoding video content across the open internet have the unfortunate consequence of introducing additional latency at almost every stage. An encoder waits until it has encoded the last byte of a segment before uploading it; the CDN then waits to receive the last byte of a segment before forwarding the first byte; and the player waits to receive the last byte of a segment before decoding the first one. And that is the minimum: as we have already discussed, Apple requires a three-segment buffer to proceed with a stream.

If transfer were instantaneous between these three processes, then latency for a 10-second segment would only be 10 seconds. But it’s not. Think of a queue of cars behind a traffic light. Theoretically all of them should be able to accelerate up to the same speed as soon as the light goes green, but there’s a lag between each car and it can take several seconds for even the third or fourth car to start moving after the first one has started to accelerate. Hence the fact that latency in an OTT delivery system can be up to 5x the segment length.
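A toy model makes the multiplier easier to see. It assumes each stage stores a whole segment before passing it on, that every hop moves data at a fixed multiple of real time, and that the player buffers a fixed number of segments. All parameter names and values are illustrative assumptions rather than measurements.

```python
def segmented_latency(
    segment_s: float,
    hops: int,
    transfer_speedup: float,
    buffered_segments: int,
) -> float:
    """Rough end-to-end latency in seconds for store-and-forward segment delivery."""
    encode_wait = segment_s                           # encoder finishes the segment first
    transfer = hops * (segment_s / transfer_speedup)  # each hop waits for the last byte
    player_buffer = buffered_segments * segment_s     # segments held before playback
    return encode_wait + transfer + player_buffer


# 10-second segments, two hops (encoder to CDN, CDN to player) that each move
# data at twice real time, and a three-segment player buffer.
latency = segmented_latency(10, hops=2, transfer_speedup=2, buffered_segments=3)
print(latency, latency / 10)  # 50.0 5.0

# Instantaneous transfer and no player buffer leaves only the segment itself.
print(segmented_latency(10, hops=2, transfer_speedup=float("inf"), buffered_segments=0))  # 10.0
```

Under these assumed values the model lands on five times the segment length; different transfer speeds or buffer depths move the figure up or down.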

What is required is the ability to break those segments down. Simply using shorter segment times with current HAS technologies has proven to be a non-starter: picture quality suffers and CDNs lose stability. What is required is another method entirely.

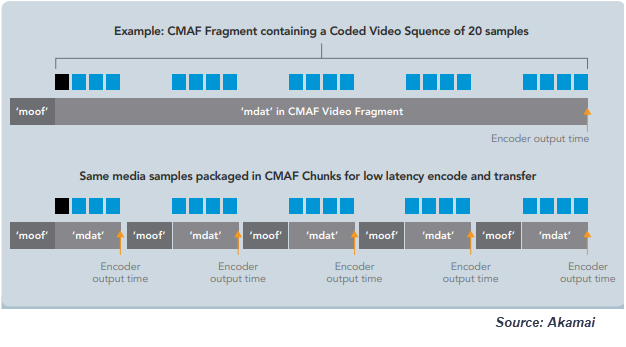

CMAF uses what is known as ‘chunked encoding’ and ‘chunked transfer encoding’. This breaks the video down into smaller chunks of a set duration that, to give a very broad-brush outline, can be published as soon as encoded and forwarded as soon as received. Delivery takes place in near real-time while later chunks are still being processed. All this means that latency and segment length are no longer inextricably linked.
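The difference in behaviour can be sketched with Python generators standing in for the encoder, CDN, and player. This illustrates ordering only, not real networking or media handling, and the stage names and event log are assumptions made for the example.

```python
from typing import Iterable, Iterator, List


def encoder(n_chunks: int, log: List[str]) -> Iterator[int]:
    """Produce chunks one at a time, logging each as it is encoded."""
    for i in range(n_chunks):
        log.append(f"encoded {i}")
        yield i


def cdn(chunks: Iterable[int], log: List[str]) -> Iterator[int]:
    """Pass each chunk on as soon as it is received."""
    for chunk in chunks:
        log.append(f"forwarded {chunk}")
        yield chunk


def player(chunks: Iterable[int], log: List[str]) -> None:
    """Consume chunks in arrival order."""
    for chunk in chunks:
        log.append(f"played {chunk}")


def run_chunked(n_chunks: int) -> List[str]:
    """Each chunk flows through every stage before the next one is encoded."""
    log: List[str] = []
    player(cdn(encoder(n_chunks, log), log), log)
    return log


def run_segmented(n_chunks: int) -> List[str]:
    """Each stage waits for the complete segment before handing it on."""
    log: List[str] = []
    encoded = list(encoder(n_chunks, log))   # wait for the last chunk
    forwarded = list(cdn(encoded, log))      # wait again at the CDN
    player(forwarded, log)
    return log


print(run_chunked(2))
# ['encoded 0', 'forwarded 0', 'played 0', 'encoded 1', 'forwarded 1', 'played 1']
print(run_segmented(2))
# ['encoded 0', 'encoded 1', 'forwarded 0', 'forwarded 1', 'played 0', 'played 1']
```

In the chunked run the first chunk reaches the player before the second has been encoded, which is why latency stops tracking segment length.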

There is a lot of technical detail that can be unpacked here. For a detailed summary of how chunked encoding and chunked transfer encoding take place, the Akamai whitepaper Ultra-Low-Latency Streaming using Chunked-Encoded and Chunked-Transferred CMAF is the current industry reference.

Driving OTT Latency Down

Of course, hidden in that detail are several challenges to driving down OTT latency to sub-broadcast levels. To achieve true Ultra-Low-Latency CMAF (ULL-CMAF) an entire chain must be in place and working perfectly. For example, HTTP chunked transfer encoding needs to be used at each step in the distribution chain; HTML5 players operating in a browser must use the Fetch API; and the client needs to be able to cope with very low buffers.
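For reference, the framing used by HTTP/1.1 chunked transfer encoding is simple: each chunk is sent as its length in hexadecimal, a CRLF, the payload, and another CRLF, with a zero-length chunk marking the end of the body. The sketch below encodes and decodes that framing in memory. It ignores trailers and assumes well-formed input, and the function names are illustrative rather than taken from any library.

```python
from typing import Iterable, Iterator

CRLF = b"\r\n"


def encode_chunked(payloads: Iterable[bytes]) -> bytes:
    """Frame payloads as an HTTP/1.1 chunked body (no trailers)."""
    out = bytearray()
    for payload in payloads:
        if not payload:
            continue  # a zero-length chunk would end the body early
        out += f"{len(payload):X}".encode("ascii") + CRLF + payload + CRLF
    out += b"0" + CRLF + CRLF  # terminating chunk
    return bytes(out)


def decode_chunked(body: bytes) -> Iterator[bytes]:
    """Yield each payload from a well-formed chunked body."""
    pos = 0
    while True:
        line_end = body.index(CRLF, pos)
        # Drop any optional chunk extension after ';' before parsing the size.
        size_field = body[pos:line_end].split(b";", 1)[0].strip()
        size = int(size_field.decode("ascii"), 16)
        pos = line_end + len(CRLF)
        if size == 0:
            return
        yield body[pos:pos + size]
        pos += size + len(CRLF)


wire = encode_chunked([b"chunk-1", b"chunk-two"])
print(wire)                        # b'7\r\nchunk-1\r\n9\r\nchunk-two\r\n0\r\n\r\n'
print(list(decode_chunked(wire)))  # [b'chunk-1', b'chunk-two']
```

In practice the HTTP server or client library applies this framing. The point is that each chunk can be put on the wire before the total length of the segment is known.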

How low can latency realistically go? In laboratory tests, latency has been driven down to as low as 600ms. While that is unachievable in the real world, proof-of-concept testing there indicates that a still better-than-broadcast 3 seconds is achievable. Of that, 1.5-2 seconds is taken up by the player buffer alone.

So, what needs to be done from this point to get to the low-latency future?

There are two stages. The first is CMAF itself, which is slowly becoming commercially deployable and, as we have seen, is seeing an impressive upsurge. Encoders, players, and CDNs are all available. That box can certainly be ticked by the end of this year.

The second stage is the drive towards ULL-CMAF, and that will take a bit more time. The entire streaming pipeline must be optimized from packager to video player, and there are still challenges to overcome. Some legacy devices will not be able to support chunked encoding and/or CBCS encryption. Dynamic Ad Insertion, which is growing rapidly in importance, also needs to be considered, as those systems currently need an overhaul before they can react quickly enough to serve adverts in a low-latency environment. And there is further potential for ad insertion confusion given the dual DASH-manifest and HLS-playlist nature of the format.

But the future is positive, and the advantage of a standards-based approach to low latency is that it becomes an industry-wide approach as well. Over the next few years the hope is that OTT latency, and the issue of ABR format fragmentation as a whole, will simply disappear from view.