Artificial Intelligence use cases are growing all the time, and one of the particularly powerful ways we are using AI is to combat video piracy.

We’ve been working with AI a lot at VO over recent years. Like the rest of the industry we are finding that it solves problems in many areas and provides a significant boost to many business processes. Today though, we’re going to look at how we are using it to fight video piracy and how its impact depends to a large extent on expertise that is already present in a company.

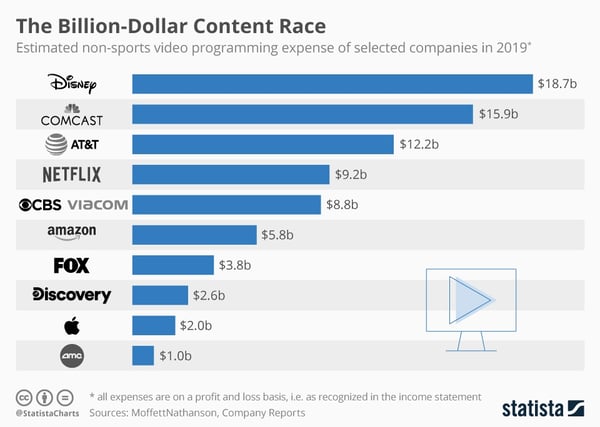

First though, some context, and what has become known as the billion-dollar content race. The amount of money being spent on non-sports video programming is extraordinary. Once upon a time it was the great networks and national public broadcasters that made the largest investments in content. As the chart below shows, that has changed.

Combined, those 10 companies alone had an estimated content budget in 2019 of $80 billion. This is a lot of money and video pirates, like any criminals, tend to be attracted to lots of money.

Pirates were also, at least at the time, offering something unique: they were the first to aggregate content from multiple providers and offer it in a low-cost, albeit illegal, bundle. This has been extraordinarily attractive to consumers, though operators can now offer their own legitimate aggregated services via solutions such as our own VO Super Aggregator.

The sheer amount of money involved, though, has led video pirates to evolve and iterate rapidly. New forms of attack have been pioneered in recent times, such as restreaming via HDMI and organised credential sharing, while the distribution of pirated video has undergone a game-changing transformation.

Once upon a time, accessing illegal material via techniques such as BitTorrent required at least a small amount of computer knowledge. Illegal content can now be delivered easily and cheaply via exactly the same OTT style of delivery that the likes of Netflix use. Pirates even make copycat replicas of legal applications to short-circuit the process of developing their own tools.

Different techniques have been developed on our side of the industry to fight against this, such as Dynamic Watermarking, which enables us to take down illegal restreams of live events in real time.

Here though, I want to show you briefly how we use AI to combat piracy. As with many things in the modern era, it hinges on being able to analyse enormous amounts of data and pull out the relevant patterns.

AI, Video Piracy, and Big Data

It is not just legal activity that generates impressive amounts of data. The effective monitoring of illegal activity also provides us with a huge amount of material to work with.

The volume is impressive.

- 1.5 billion new suspicious links analysed per month

- 1,600 streaming links that can be identified for a single popular sporting event

- 1 TB of logs collected per day

This is a lot of data to work with, and it is here that AI comes in: it lets us take a heuristic approach to the tsunami of information passing across our servers and identify patterns swiftly and accurately.

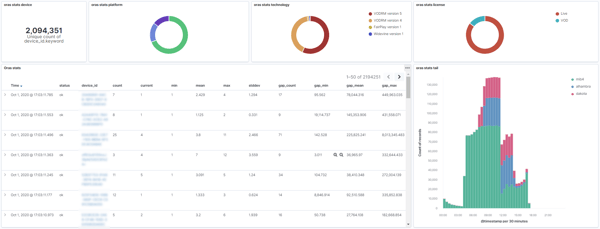

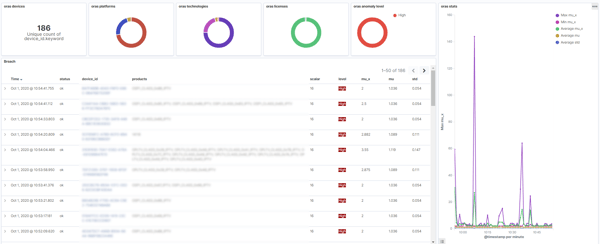

We work through both security data and viewership data to identify patterns using our AI-based fraud detection system.

Our technology computes, in real time, the features that characterize device and consumer behaviors. All results can be injected into popular SIEM products, e.g. Elastic Security or Splunk, so that we can seamlessly integrate our technology into our customers’ existing SOC.
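To make the idea of behavioural features concrete, here is a minimal sketch in Python. It is not our production pipeline: the `PlaybackEvent` record, its field names, and the three features are assumptions chosen purely for illustration, and it processes a batch of events where a real-time system would update its counters incrementally.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class PlaybackEvent:
    # Hypothetical log record; the field names are assumptions for illustration.
    account_id: str
    device_id: str
    ip_address: str
    timestamp: float  # seconds since the epoch


def account_features(events, window_start, window_end):
    """Aggregate simple per-account behaviour features over one time window."""
    devices = defaultdict(set)
    ips = defaultdict(set)
    counts = defaultdict(int)
    for ev in events:
        # Only events inside the half-open window [start, end) are counted.
        if window_start <= ev.timestamp < window_end:
            devices[ev.account_id].add(ev.device_id)
            ips[ev.account_id].add(ev.ip_address)
            counts[ev.account_id] += 1
    return {
        account: {
            "distinct_devices": len(devices[account]),
            "distinct_ips": len(ips[account]),
            "playback_starts": counts[account],
        }
        for account in counts
    }


events = [
    PlaybackEvent("acct-1", "tv-a", "203.0.113.5", 10.0),
    PlaybackEvent("acct-1", "phone-b", "203.0.113.5", 20.0),
    PlaybackEvent("acct-2", "tv-c", "198.51.100.7", 30.0),
    PlaybackEvent("acct-1", "tv-a", "192.0.2.9", 4000.0),  # outside the window
]
features = account_features(events, window_start=0.0, window_end=3600.0)
```

A dictionary of plain numbers per account is easy to serialise, which is one reason a shape like this suits forwarding to a SIEM.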

We use rule-based detection over the features first. Rules based on known attack scenarios are input into the system and, when similar events occur, they are flagged up as anomalous and deserving of attention.
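As a rough illustration of rule-based detection over features, the sketch below expresses each known attack scenario as a named predicate. The rule names, feature keys, and thresholds are hypothetical and are not taken from our actual rule set.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass(frozen=True)
class Rule:
    name: str
    predicate: Callable[[Dict[str, int]], bool]


# Hypothetical rules and thresholds, chosen only for illustration.
RULES = [
    Rule("possible_credential_sharing", lambda f: f["distinct_ips"] >= 5),
    Rule("too_many_devices", lambda f: f["distinct_devices"] >= 8),
]


def evaluate_rules(features_by_account, rules=RULES):
    """Return {account: [names of rules that fired]} for flagged accounts only."""
    flagged = {}
    for account, feats in features_by_account.items():
        hits = [rule.name for rule in rules if rule.predicate(feats)]
        if hits:
            flagged[account] = hits
    return flagged


sample = {
    "acct-1": {"distinct_devices": 2, "distinct_ips": 1},
    "acct-2": {"distinct_devices": 9, "distinct_ips": 6},
}
alerts = evaluate_rules(sample)
```

Keeping rules as data rather than hard-coded branches means a new attack scenario can be added without touching the evaluation loop.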

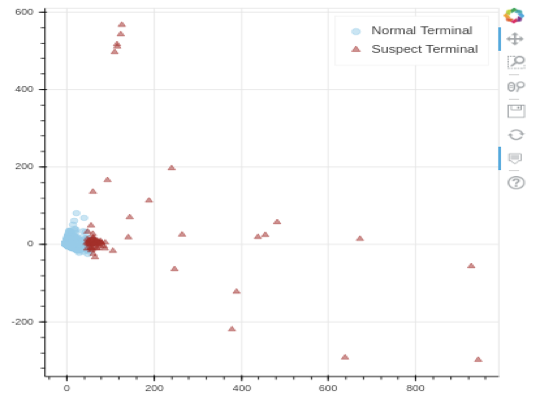

Semi-supervised and unsupervised anomaly detection algorithms are also used, which look at unusual data patterns and equally then flag them up for human operators.
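For a flavour of what unsupervised detection means, here is a deliberately simple statistical stand-in: a modified z-score based on the median and the median absolute deviation (MAD). It is not the algorithm we deploy, and the sample data, the function name, and the 3.5 threshold are assumptions for illustration. The point is that no labelled examples are needed; values are flagged only because they sit far from the bulk of the data.

```python
import statistics


def mad_outliers(values_by_key, threshold=3.5):
    """Flag keys whose modified z-score exceeds `threshold` in absolute value."""
    values = list(values_by_key.values())
    median = statistics.median(values)
    mad = statistics.median([abs(v - median) for v in values])
    if mad == 0:
        return {}  # no spread in the data, so nothing can be ranked as unusual
    # 0.6745 rescales the MAD so scores are comparable to standard z-scores.
    scores = {k: 0.6745 * (v - median) / mad for k, v in values_by_key.items()}
    return {k: s for k, s in scores.items() if abs(s) > threshold}


# Hypothetical concurrent-stream counts per account.
concurrent_streams = {"a": 3, "b": 4, "c": 5, "d": 4, "e": 3, "f": 60}
outliers = mad_outliers(concurrent_streams)
```

Here only account `f` is flagged, and it would then be passed to a human operator for review.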

These are all deployed alongside unique visualisation and contextualisation tools that help us further refine the accuracy of the process.

While AI is an extremely useful tool for detecting piracy, its use poses some challenges, many of them centred on training. The maxim Garbage In, Garbage Out has never been more relevant than when training AI systems: if they are fed the wrong starting assumptions, the end results can diverge increasingly from real-world data.

This is where human and organisational expertise comes in. There are three main issues to contend with.

- Defining what is normal and what constitutes an outlier

- There is often a shortage of accurately labelled data that can be used for training purposes (though technologies such as watermarking can be used to validate the algorithm)

- Consumer behaviour keeps evolving. What is abnormal one year can be normal the next
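One common way to cope with shifting definitions of normal is to re-estimate the baseline from recent data only. The sketch below shows that idea with a fixed-size rolling window. The class name, window size, and `k` multiplier are assumptions for illustration rather than a description of our system.

```python
from collections import deque
import statistics


class RollingBaseline:
    """Judge new values against the mean and spread of recent history only."""

    def __init__(self, window_size=30, k=3.0):
        self.history = deque(maxlen=window_size)  # old values fall off the left
        self.k = k

    def is_outlier(self, value):
        if len(self.history) < 2:
            return False  # not enough history to estimate spread
        mean = statistics.fmean(self.history)
        spread = statistics.stdev(self.history)
        return abs(value - mean) > self.k * max(spread, 1e-9)

    def update(self, value):
        self.history.append(value)


baseline = RollingBaseline(window_size=5)
for hours in [2, 3, 2, 3, 2]:       # earlier viewing habits (hours per day)
    baseline.update(hours)
flagged_before = baseline.is_outlier(6)

for hours in [6, 7, 6, 7, 6]:       # habits shift; the window forgets old data
    baseline.update(hours)
flagged_after = baseline.is_outlier(6)
```

The same six hours of daily viewing is abnormal against the earlier window and normal against the later one, which is the behaviour the last bullet describes.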

AI = Awesome Inputs

There is a lot of money riding on the battle against content piracy. $80 billion was spent by 10 companies alone in a single year on producing content for an increasingly voracious audience. And while Covid-19 will inevitably have led to a shrinkage of production spending in 2020 (it is hard to spend money when you can’t physically get on set), the sharp rise in viewing figures and the demand for yet more content mean that budget is only likely to grow in the short term.

It is a lot of money to invest and a lot of money to lose. Even if those 10 companies were making no profit and that investment was translated entirely to revenue, losing just 1% of that to piracy would be a loss of $800 million a year, every year. This is why investment in AI to help combat piracy is also running at a high level.

But it is important to note that the inputs have to be correct to begin with. There is little point investing in AI solutions that run on faulty assumptions or fail to keep abreast of changes in viewing behaviours, known attack scenarios, and weaknesses that could produce false positives. When it comes down to it, an AI-based anti-piracy solution relies not just on the AI itself but on the expertise (in this case, security expertise) that shapes it, trains it, and keeps it updated.

To find out more about VO’s AI-driven anti-piracy solutions, click here.